Choosing the right GPU for deep learning on AWS

Illustration by author

Just a decade ago, if you wanted access to a GPU to accelerate your data processing or scientific simulation code, you’d either have to get hold of a PC gamer or contact your friendly neighborhood supercomputing center. Today, you can log on to your AWS console and choose from a range of GPU based Amazon EC2 instances.

What GPUs can you access on AWS, you ask? You can launch GPU instances with different GPU memory sizes (8 GB, 16 GB, 24 GB, 32 GB, 40 GB), NVIDIA GPU generations (Ampere, Turing, Volta, Maxwell, Kepler), different capabilities (FP64, FP32, FP16, INT8, Sparsity, Tensor Cores, NVLink), different numbers of GPUs per instance (1, 2, 4, 8, 16), and different CPUs (Intel, AMD, Graviton2). You can also select instances with different vCPU counts (core thread count), system memory and network bandwidth, and add a range of storage options (object storage, network file systems, block storage, etc.). In summary, you have options.

My goal with this blog post is to provide you with guidance on how you can choose the right GPU instance on AWS for your deep learning projects. I’ll discuss key features and benefits of various EC2 GPU instances, and workloads that are best suited for each instance type and size. If you’re new to AWS, or new to GPUs, or new to deep learning, my hope is that you’ll find the information you need to make the right choice for your projects.

Topics covered in this blog post:

- Key recommendations for the busy data scientist/ML practitioner

- Why you should choose the right GPU instance not just the right GPU

- Deep dive on GPU instance types: P4, P3, G5 (G5g), G4, P2 and G3

- Other machine learning accelerators and instances on AWS

- Cost optimization tips when using GPU instances for ML

- What software and frameworks to use on AWS?

- Which GPUs to consider for HPC use-cases?

- A complete and unapologetically detailed spreadsheet of all AWS GPU instances and their features

Key recommendations for the busy data scientist/ML practitioner

In a hurry? Just want the final recommendation without the deep dive? I’ve got you covered. Here are 5 GPU instance recommendations that should serve the majority of deep learning use-cases. However, I do recommend you come back and review the rest of the article so you can make a more informed decision.

1. Highest performing multi-GPU instance on AWS

Instance: p4d.24xlarge

When to use it: When you need all the performance you can get. Use it for distributed training on large models and datasets.

What you get: 8 x NVIDIA A100 GPUs with 40 GB GPU memory per GPU. Based on the latest NVIDIA Ampere architecture. Includes 3rd generation NVLink for fast multi-GPU training.

2. Highest performing single-GPU instance on AWS:

Instance: p3.2xlarge

When to use it: When you want the highest performance single GPU and you’re fine with 16 GB of GPU memory.

What you get: 1 x NVIDIA V100 GPU with 16 GB of GPU memory. Based on the older NVIDIA Volta architecture. The best performing single GPU is still the NVIDIA A100 on the P4 instance, but P4 only comes with 8 x NVIDIA A100 GPUs per instance. The V100 has a slight performance edge over the NVIDIA A10G on the G5 instance discussed next, but G5 is far more cost-effective and has more GPU memory.

3. Best performance/cost, single-GPU instance on AWS

Instance: g5.xlarge

When to use it: When you want high performance and more GPU memory at a lower cost than a P3 instance.

What you get: 1 x NVIDIA A10G GPU with 24 GB of GPU memory, based on the latest Ampere architecture. The NVIDIA A10G can be seen as a lower powered cousin of the A100 on the p4d.24xlarge, so it’s easy to migrate and scale when you need more compute. Consider larger sizes with g5.(2/4/8/16)xlarge for the same single GPU with more vCPUs and higher system memory if you have more pre- or post-processing steps.

4. Best performance/cost, multi-GPU instance on AWS:

Instance: p3.(8/16)xlarge

When to use it: Cost-effective multi-GPU model development and training. What you get: p3.8xlarge has 4 x NVIDIA V100 GPUs and p3.16xlarge has 8 x NVIDIA V100 GPUs with 16 GB of GPU memory on each GPU, based on the older NVIDIA Volta architecture. For larger models, datasets and faster performance consider P4 instances.

5. High-performance GPU instance at a budget on AWS

Instance: g4dn.xlarge

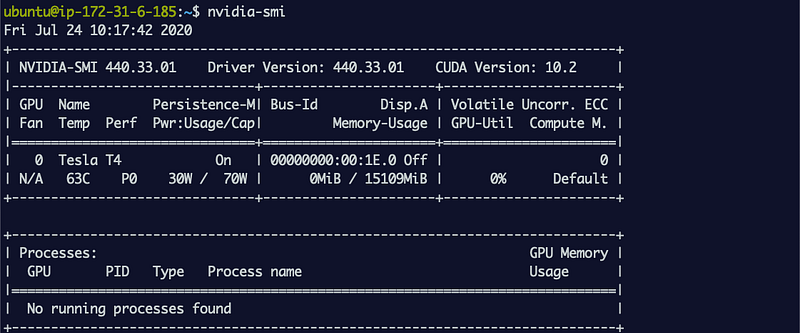

When to use it: Lower performance than other options at lower cost for model development and training. Cost effective model inference deployment. What you get: 1 x NVIDIA T4 GPU with 16 GB of GPU memory. Based on the previous generation NVIDIA Turing architecture. Consider g4dn.(2/4/8/16)xlarge for more vCPUs and higher system memory if you have more pre or post processing.

With that you should have enough information to get started with your project. If you’re still itching to learn more, let’s dive deep and geek out on every instance type, GPU type, their features on AWS and discuss when and why you should consider each of them.

Why you should choose the right “GPU instance” not just the right “GPU”

Or why you should look at the whole system and not just the type of GPU

A GPU is the workhorse of a deep learning system, but the best deep learning system is more than just a GPU. You have to choose the right amount of compute power (CPUs, GPUs), storage, networking bandwidth and optimized software that can maximize utilization of all available resources.

Some deep learning models need higher system memory or a more powerful CPU for data pre-processing, while others may run fine with fewer CPU cores and lower system memory. This is why you’ll see many Amazon EC2 GPU instance options, some with the same GPU type but different CPU, storage and networking options. If you’re new to AWS or new to deep learning on AWS, making this choice can feel overwhelming.

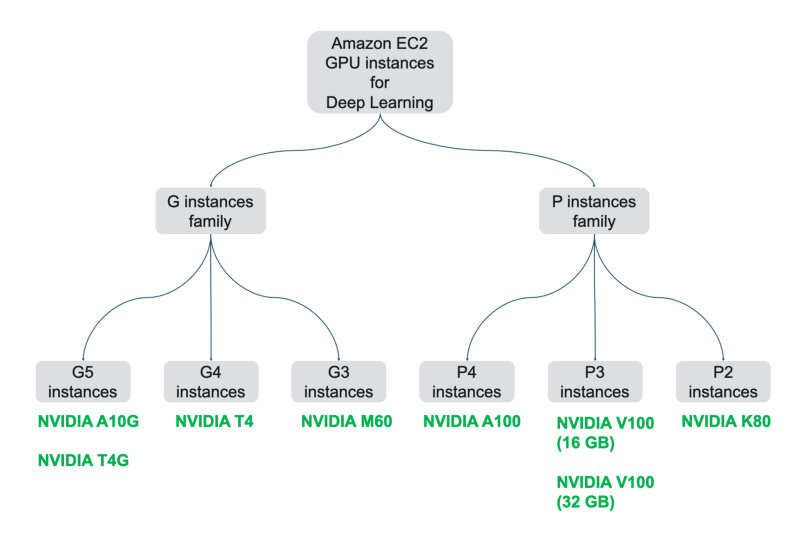

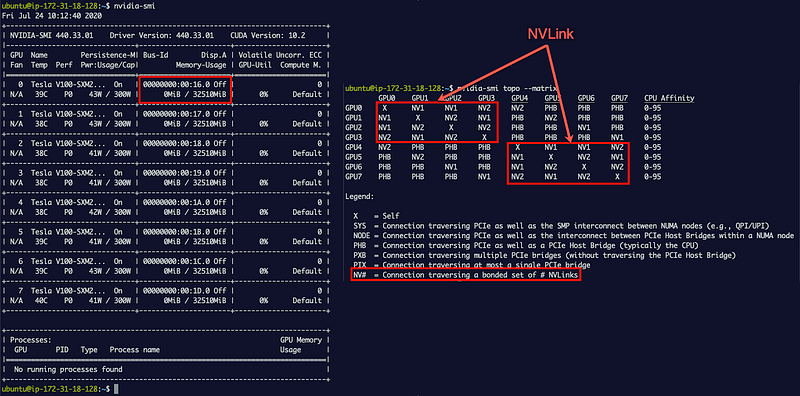

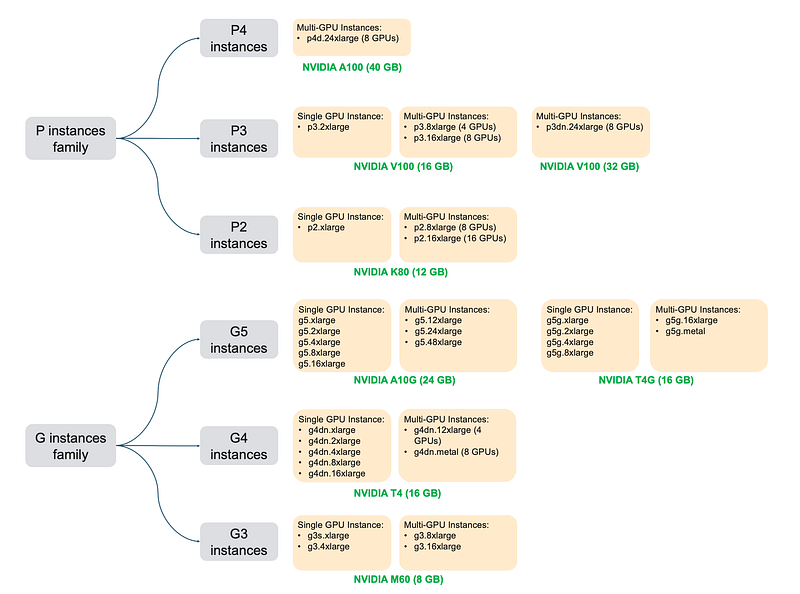

Let’s start with high-level EC2 GPU instance nomenclature on AWS. There are two families of GPU instances — the P family and the G family of EC2 instances and the chart below shows the various instance generations and instance sizes.

Amazon EC2 GPU instances for deep learning

Historically, the P instance type represented GPUs better suited for high-performance computing (HPC) workloads, characterized by their higher performance (higher wattage, more CUDA cores) and support for double precision (FP64) used in scientific computing. G instance types had GPUs better suited for graphics and rendering, characterized by their lack of double precision and their lower cost and performance (lower wattage, fewer CUDA cores).

All this has started to change as machine learning workloads on GPUs have grown rapidly in recent years. Today, the newer generation P and G instance types are both suited for machine learning. The P instance type is still recommended for HPC workloads and demanding machine learning training workloads, and I recommend the G instance type for machine learning inference deployments and less compute-intensive training. All this will become clearer in the following section when we discuss specific GPU instance types.

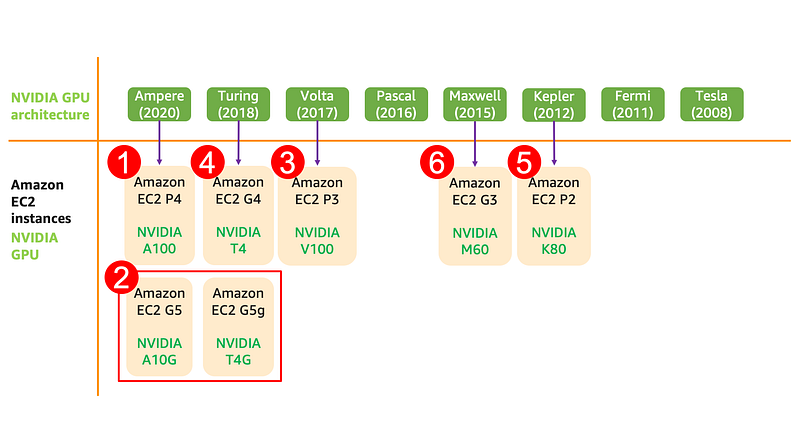

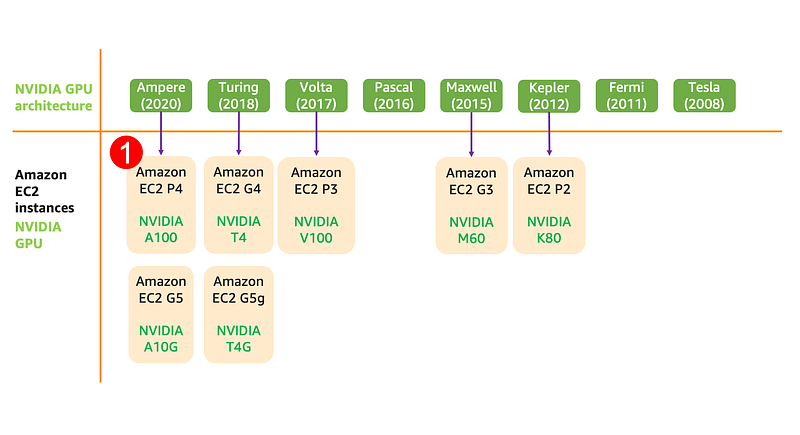

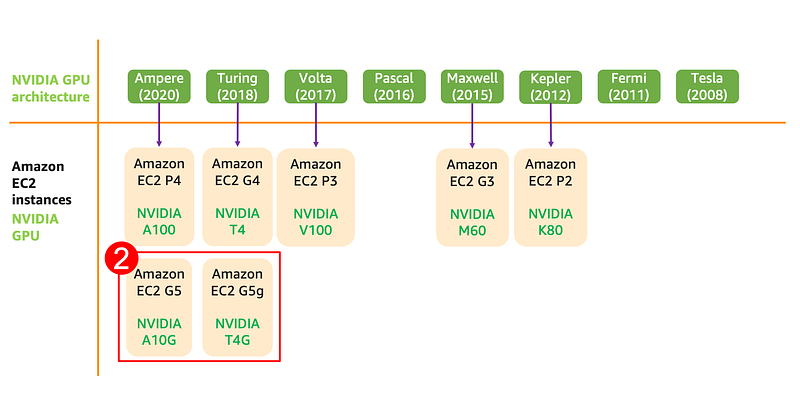

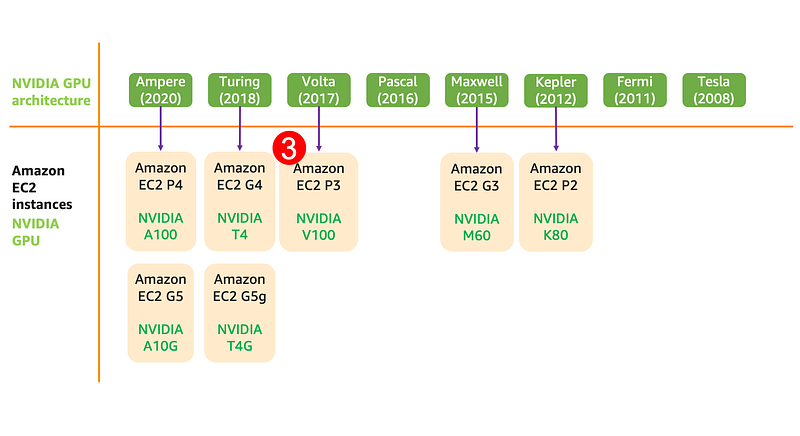

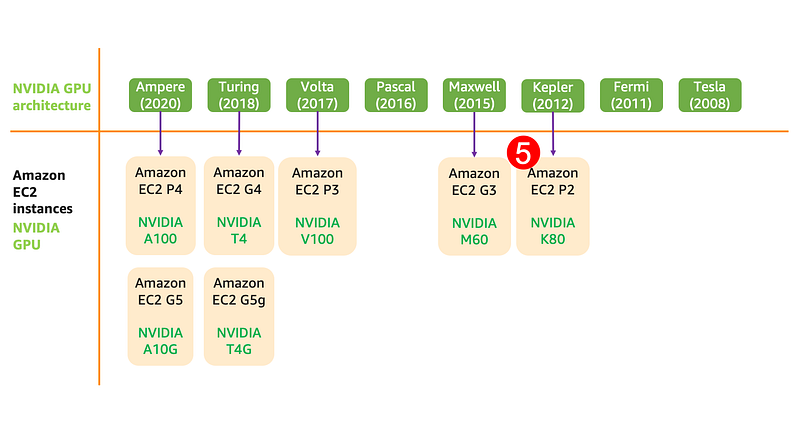

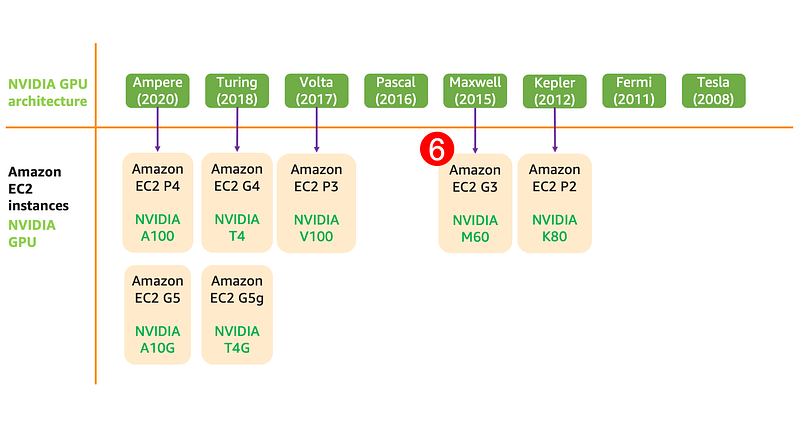

Each instance size has a certain vCPU count, GPU memory, system memory, GPUs per instance, and network bandwidth. The number next to the letter (P3, G5) represents the instance generation. The higher the number, the newer the instance type. Each instance generation can have GPUs with a different architecture, and the timeline image below shows NVIDIA GPU architecture generations, GPU types and the corresponding EC2 instance generations.

Now let’s take a look at each of these instances by family, generation and sizes in the order listed below.

We’ll discuss each GPU instance type in the order shown here

Amazon EC2 P4: Highest performing deep learning training GPU instance type on AWS.

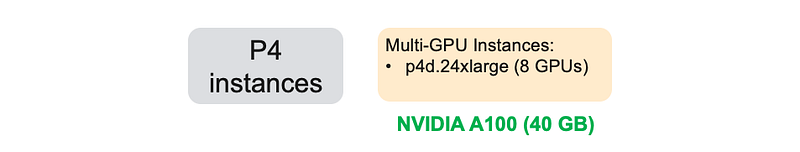

P4 instances provide access to NVIDIA A100 GPUs based on the NVIDIA Ampere architecture. The instance type comes in only one size: a multi-GPU instance with 8 A100 GPUs with 40 GB of GPU memory per GPU, 96 vCPUs, and 400 Gbps network bandwidth for record-setting training performance.

P4 instance features at a glance:

- GPU Generation: NVIDIA Ampere

- Supported precision types: FP64, FP32, FP16, INT8, BF16, TF32, Tensor Cores 3rd generation (mixed-precision)

- GPU memory: 40 GB per GPU

- GPU interconnect: NVLink high-bandwidth interconnect, 3rd generation

What’s new in the NVIDIA Ampere based NVIDIA A100 GPU on P4 instances?

Every new GPU generation is faster than the previous one, and there’s no exception here. The NVIDIA A100 is not only significantly faster than the NVIDIA V100 (found on P3 instances discussed later), it also includes newer precision types suited for deep learning, particularly BF16 and TF32.

Deep learning training is typically done in single precision or FP32. The choice of FP32 IEEE standard format pre-dates deep learning, so hardware and chip manufacturers have started to support newer precision types that work better for deep learning. This is a perfect example of hardware evolving to suit the needs of application vs. developers having to change applications to work on existing hardware.

The NVIDIA A100 includes special cores for deep learning called Tensor Cores to run mixed-precision training. Tensor Cores were first introduced in the Volta architecture. Rather than training the model in single precision (FP32), your deep learning framework can use Tensor Cores to perform matrix multiplication in half precision (FP16) and accumulate in single precision (FP32). This often requires updating your training scripts, but can lead to much higher training performance. Each framework handles this differently, so refer to your framework’s official guides (TensorFlow, PyTorch and MXNet) for using mixed-precision.
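To see why the accumulation step is kept in FP32, here is a minimal CPU-side sketch. It is not Tensor Core code: it only emulates FP16 and FP32 rounding with Python’s `struct` module, and the helper names (`to_fp16`, `to_fp32`, `dot`) are made up for illustration. The inputs are stored in FP16 in both cases, and only the precision of the running sum changes.

```python
import struct


def to_fp16(x: float) -> float:
    """Round a Python float to the nearest IEEE half-precision (FP16) value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_fp32(x: float) -> float:
    """Round a Python float to the nearest IEEE single-precision (FP32) value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def dot(a, b, accumulate):
    """Dot product with FP16 inputs; `accumulate` rounds the running sum."""
    total = 0.0
    for x, y in zip(a, b):
        product = to_fp16(x) * to_fp16(y)  # inputs are stored in FP16
        total = accumulate(total + product)
    return total


n = 10_000
a = [1.0] * n
b = [0.001] * n  # the true dot product is roughly 10

fp16_sum = dot(a, b, accumulate=to_fp16)  # running sum kept in FP16
fp32_sum = dot(a, b, accumulate=to_fp32)  # running sum kept in FP32

print(f"FP16 accumulation: {fp16_sum}")
print(f"FP32 accumulation: {fp32_sum}")
```

With the running sum in FP16, the total stops growing once each new product is smaller than half the spacing between neighbouring FP16 values, so it ends up well short of 10. The FP32 running sum does not have this problem at this scale.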

The NVIDIA A100 GPU supports two new precision formats: BF16 and TensorFloat-32 (TF32). The advantage of TF32 is that the TF32 Tensor Cores on the NVIDIA A100 can read FP32 data from the deep learning framework and produce a standard FP32 output, while internally using reduced precision. This means that unlike mixed-precision training, which often requires code changes to your training scripts, frameworks like TensorFlow and PyTorch can support TF32 out of the box. BF16 is an alternative to the IEEE FP16 standard that has a higher dynamic range and is better suited for processing gradients without loss in accuracy. TensorFlow has supported BF16 for a while, and you can now take advantage of BF16 precision on the NVIDIA A100 GPU when using p4d.24xlarge instances.
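The dynamic range difference between FP16 and BF16 is easy to see on a CPU. The sketch below is an emulation under stated assumptions, not how the GPU does it: `to_bf16` is a hypothetical helper that truncates the FP32 encoding to its top 16 bits (real hardware typically rounds), and `to_fp16` uses Python’s `struct` half-precision format.

```python
import struct


def to_bf16(x: float) -> float:
    """Emulate BF16 by keeping only the top 16 bits of the FP32 encoding.

    Assumption: truncation instead of round-to-nearest, which is enough
    to illustrate range and precision.
    """
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    return struct.unpack("<f", struct.pack("<I", bits & 0xFFFF0000))[0]


def to_fp16(x: float) -> float:
    """Round to IEEE FP16; values beyond its largest finite number become inf."""
    try:
        return struct.unpack("<e", struct.pack("<e", x))[0]
    except OverflowError:
        return float("inf")


tiny_gradient = 1.0e-10
huge_activation = 1.0e20

print(to_fp16(tiny_gradient), to_bf16(tiny_gradient))      # FP16 underflows, BF16 does not
print(to_fp16(huge_activation), to_bf16(huge_activation))  # FP16 overflows, BF16 does not
print(to_bf16(1.001))  # the price: BF16 keeps far fewer mantissa bits than FP32
```

BF16 keeps the 8-bit exponent of FP32, which is where the extra range comes from, and pays for it with a shorter mantissa than FP16.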

P4 instances come in only one size: p4d.24xlarge. Let’s take a closer look.

p4d.24xlarge: Fastest GPU instance in the cloud

If you need the absolute fastest training GPU instance in the cloud, look no further than the p4d.24xlarge. This title was previously held by the p3dn.24xlarge, which has 8 Volta architecture based NVIDIA V100 GPUs.

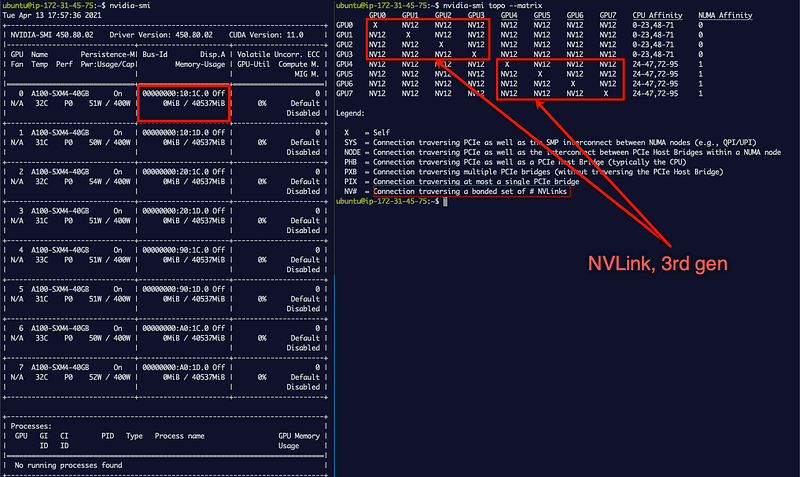

You get access to 8 NVIDIA A100 GPUs with 40 GB of GPU memory each, interconnected with 3rd generation NVLink that theoretically doubles the inter-GPU bandwidth compared to the 2nd generation NVLink on the NVIDIA V100 available on the P3 instance type, which we’ll discuss in a later section. This makes the p4d.24xlarge instance type ideal for distributed data parallel training as well as model-parallel training of large models that don’t fit on a single GPU. The instance also gives you access to 96 vCPUs, 1152 GB of system memory (highest ever on an EC2 GPU instance) and 400 Gbps network bandwidth (highest ever on an EC2 GPU instance), which becomes important for achieving near-linear scaling for large-scale distributed training jobs.

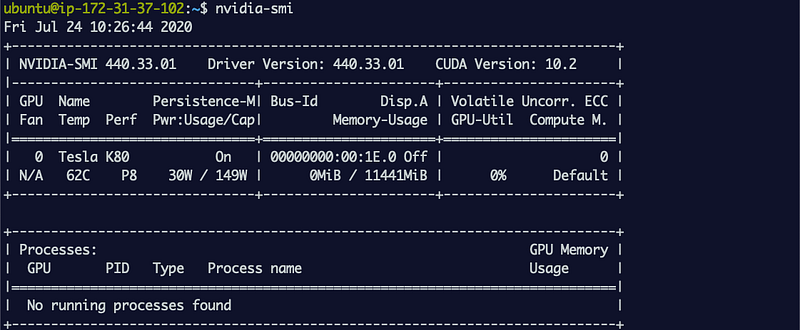

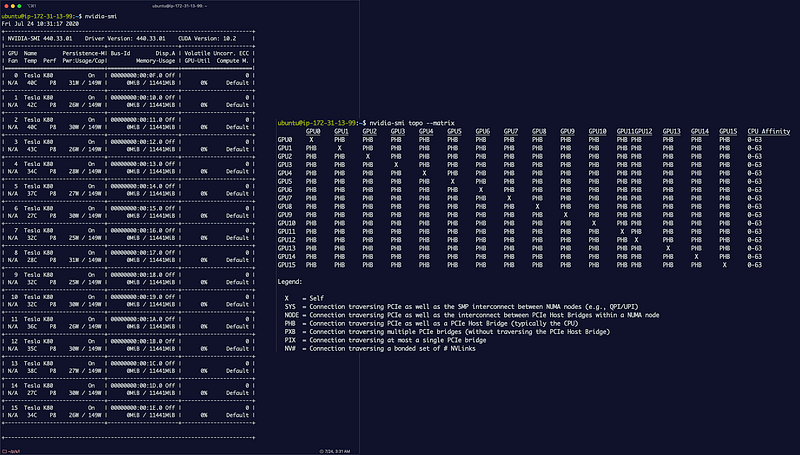

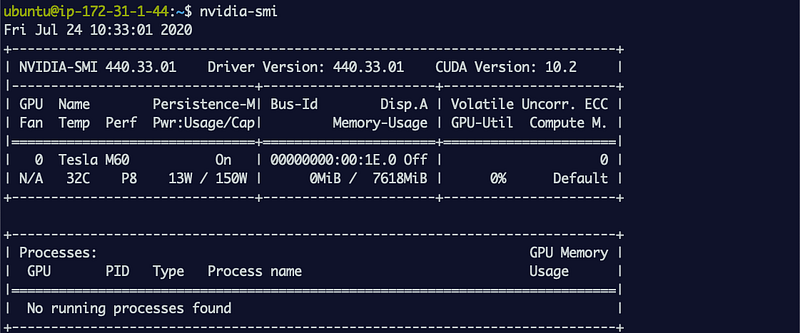

Run nvidia-smi on this instance and you can see that the GPU memory is 40 GB. This is the largest GPU memory per GPU you’ll find on AWS today. If your models are large or you’re working on 3D images or other large data batches, then this is the instance to consider. Run nvidia-smi topo --matrix and you’ll see that NVLink is used for communication between GPUs. NVLink provides much higher inter-GPU bandwidth compared to PCIe, which means that multi-GPU and distributed training jobs will run much faster.
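If you would rather check GPU name and memory from a script than read the nvidia-smi table by eye, a small wrapper like the one below can help. It is a sketch: the function names are my own, and the sample string is illustrative rather than captured from a real instance. It relies on nvidia-smi’s `--query-gpu` and `--format=csv,noheader` options and returns an empty list on machines without a working NVIDIA driver.

```python
import shutil
import subprocess

QUERY = ["nvidia-smi", "--query-gpu=name,memory.total", "--format=csv,noheader"]


def parse_gpu_list(csv_text: str):
    """Turn `name, memory` CSV lines into (name, memory) tuples."""
    gpus = []
    for line in csv_text.strip().splitlines():
        name, memory = (field.strip() for field in line.split(","))
        gpus.append((name, memory))
    return gpus


def list_gpus():
    """Query the local driver; returns [] if nvidia-smi is missing or fails."""
    if shutil.which("nvidia-smi") is None:
        return []
    try:
        result = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    except (subprocess.CalledProcessError, OSError):
        return []
    return parse_gpu_list(result.stdout)


# Illustrative sample, not captured from a real instance.
sample = "GPU-A, 40960 MiB\nGPU-B, 40960 MiB\n"
print(parse_gpu_list(sample))
print(list_gpus())
```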

Amazon EC2 G5: Best performance per cost single-GPU instance and multi-GPU instance options for inference deployment

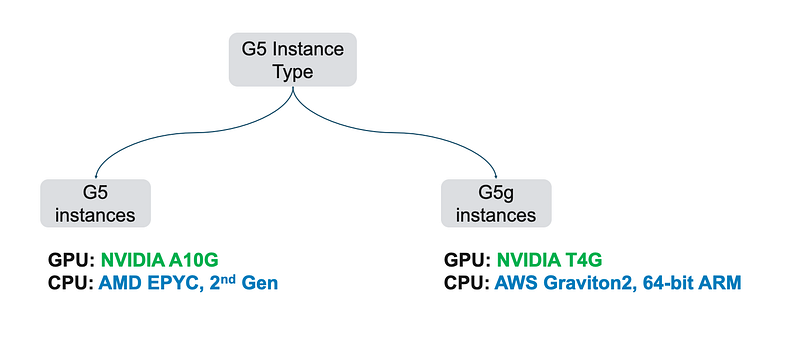

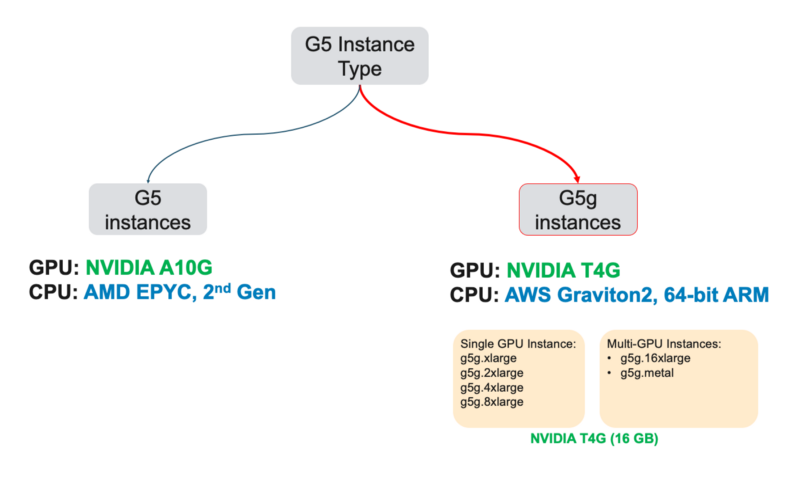

G5 instances are interesting as there are two types of NVIDIA GPUs under this instance type. This is a departure from all other instance types which have a 1:1 relationship between EC2 instance type and GPU architecture type.

G5 instance type has two different sub categories with different CPU and GPU types

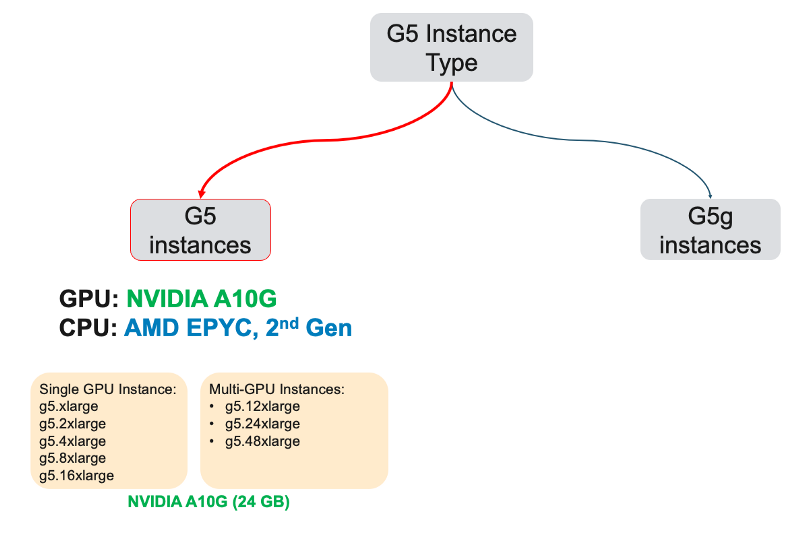

And they each come in different instance sizes that include single and multi-GPU instances.

First let’s take a look at the G5 instance type, and particularly the g5.xlarge instance size which I discussed in the key recommendations list at the beginning.

G5 instances: Best performance per cost single-GPU instances on AWS

G5 instance features at a glance:

- GPU Generation: NVIDIA Ampere

- Supported precision types: FP64, FP32, FP16, INT8, BF16, TF32, Tensor Cores 3rd generation (mixed-precision)

- GPU memory: 24 GB

- GPU interconnect: PCIe

What do you get with G5?

The single-GPU G5 instances, g5.xlarge and g5.(2/4/8/16)xlarge, offer the best performance per cost ratio for a single-GPU instance on AWS. Start with g5.xlarge as your single-GPU model development, prototyping and training instance. You can increase the size to g5.(2/4/8/16)xlarge for more vCPUs and system memory to better handle data pre- and post-processing that relies on CPU power. G5 also comes in multi-GPU instance sizes (4 GPUs, 8 GPUs), but the single-GPU options with the NVIDIA A10G offer the best performance/cost profile for training and inference deployment.

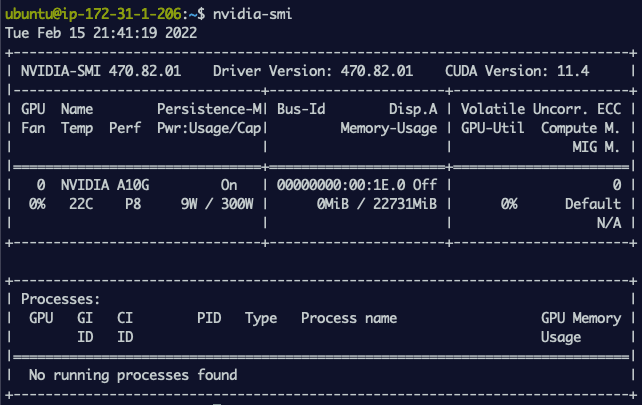

If you take a look at the output of nvidia-smi for the g5.xlarge instance, you’ll see that the Thermal Design Power (TDP), which is the maximum power the GPU can draw, is 300W. Compare this with the output of nvidia-smi shown in the P4 section above, which shows a TDP of 400W. This makes the NVIDIA A10G in the G5 instances a lower powered cousin of the NVIDIA A100 found on the P4 instance type. Since it’s also based on the same NVIDIA Ampere architecture, it supports the same precision types listed for the P4 instance type.

This makes G5 instances perfect for single-GPU training, and for migrating your training workload to P4 if your models and data sizes grow and you need to do distributed training, or if you want to run multiple parallel training experiments on a faster GPU.

Output of nvidia-smi on g5.xlarge

Although you get access to multi-GPU instance sizes, I do not recommend them for multi-GPU distributed training, since there is no NVIDIA high-bandwidth NVLink GPU interconnect, and communication will fall back to PCIe, which is significantly slower. The multi-GPU options on G5 are meant to host multiple models on each GPU for inference deployment use-cases.

G5g instances: Good performance and cost-effective GPU instance, if you’re ok with an ARM CPU

G5g instance features at a glance:

- GPU Generation: NVIDIA Turing

- Supported precision types: FP32, FP16, Tensor Cores (mixed-precision), INT8

- GPU memory: 16 GB

- GPU interconnect: PCIe

What do you get with G5g?

Unlike G5 instances, G5g instances offer NVIDIA T4G GPUs, which are based on the older NVIDIA Turing architecture. The NVIDIA T4G GPU’s closest cousin is the NVIDIA T4 GPU available on the Amazon EC2 G4 instance that I’ll discuss in a later section. Interestingly, the key difference between the G5g instance and the G4 instance is the choice of CPU.

- The G5g instance offers an ARM based AWS Graviton2 CPU

- The G4 instance offers an x86 based Intel Xeon Scalable CPU

- The GPUs (T4 and T4G) are very similar in performance profiles

Your choice between these two should come down to the CPU architecture you prefer. My personal preference for machine learning today would be the G4 instance over the G5g instance, since more open-source frameworks are designed to run on Intel CPUs than on ARM based CPUs.

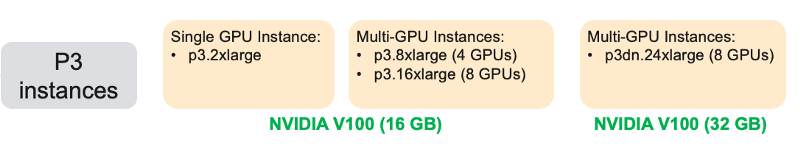

Amazon EC2 P3: Highest performance single GPU instance and cost effective multiple GPUs instance options on AWS

P3 instances provide access to NVIDIA V100 GPUs based on the NVIDIA Volta architecture, and you can launch a single GPU per instance or multiple GPUs per instance (4 GPUs, 8 GPUs). A single-GPU instance, p3.2xlarge, can be your daily driver for deep learning training. The most capable instance, p3dn.24xlarge, gives you access to 8 x V100 with 32 GB of GPU memory each, 96 vCPUs and 100 Gbps networking throughput, which is ideal for distributed training.

P3 instance features at a glance:

- GPU Generation: NVIDIA Volta

- Supported precision types: FP64, FP32, FP16, Tensor Cores (mixed-precision)

- GPU memory: 16 GB on p3.2xlarge, p3.8xlarge, p3.16xlarge; 32 GB on p3dn.24xlarge

- GPU interconnect: NVLink high-bandwidth interconnect, 2nd generation

The NVIDIA V100 also includes Tensor Cores to run mixed-precision training, but doesn’t offer the TF32 and BF16 precision types introduced in the NVIDIA A100 offered on the P4 instance. P3 instances, however, come in 4 different sizes, from a single-GPU instance size up to an 8-GPU instance size, making them the ideal choice for flexible training workloads. Let’s take a look at each of the following instance sizes: p3.2xlarge, p3.8xlarge, p3.16xlarge and p3dn.24xlarge.

p3.2xlarge: Best GPU instance for single GPU training

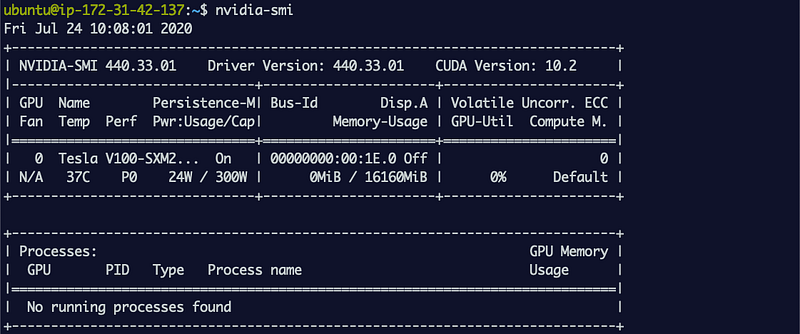

This should be your go-to instance for most of your deep learning training work if you need a single GPU and performance is a priority. G5 instances are more cost-effective, with slightly lower performance than P3. With p3.2xlarge you get access to one NVIDIA V100 GPU with 16 GB of GPU memory, 8 vCPUs, 61 GB of system memory and up to 10 Gbps network bandwidth. The V100 is the fastest GPU you can get on a single-GPU instance on AWS at the time of this writing, and it supports Tensor Cores that can further improve performance if your scripts can take advantage of mixed-precision training.

If you spin up an Amazon EC2 p3.2xlarge instance and run the nvidia-smi command, you can see that the GPU on the instance is a V100-SXM2 version which supports NVLink (we’ll discuss this in the next section). Under Memory-Usage you’ll see that it has 16 GB of GPU memory. If you need more than 16 GB of GPU memory for large models or large data sizes, then you should consider p3dn.24xlarge (more details below).

p3.8xlarge and p3.16xlarge: Ideal GPU instance for small-scale multi-GPU training and running parallel experiments

If you need more GPUs for experimenting, more vCPUs for data pre-processing and data augmentation, or higher network bandwidth, consider p3.8xlarge (with 4 GPUs) and p3.16xlarge (with 8 GPUs). Each GPU is an NVIDIA V100 with 16 GB of memory. They also include the NVLink interconnect for high-bandwidth communication between GPUs, which will come in handy for multi-GPU training. With p3.8xlarge you get access to 32 vCPUs and 244 GB of system memory, and with p3.16xlarge you get access to 64 vCPUs and 488 GB of system memory. These instances are ideal for a couple of use cases:

Multi-GPU training jobs: If you’re just getting started with multi-GPU training, 4 GPUs on p3.8xlarge or 8 GPUs on a p3.16xlarge can give you a nice speedup. You can also use this instance to prepare your training scripts for much larger-scale multi-node training jobs, which often require you to modify your training scripts using libraries like Horovod, tf.distribute.Strategy or torch.distributed. Refer to my step-by-step guide to using Horovod for distributed training:

Blog post: A quick guide to distributed training with TensorFlow and Horovod on Amazon SageMaker

Parallel experiments: Multi-GPU instances also come in handy when you have to run variations of your model architecture and hyperparameters in parallel, to experiment faster. With p3.16xlarge you can run training on up to 8 variants of your model. Unlike multi-GPU training jobs, since each GPU is running training independently and doesn’t block the use of other GPUs, you can be more productive during the model exploration phase.
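One common way to run independent experiments side by side is to pin each process to its own GPU with the `CUDA_VISIBLE_DEVICES` environment variable. Here is a minimal sketch of how such a plan could be built. The script name `train.py` and its `--lr` flag are hypothetical placeholders for your own training script, and the code only prints the plan rather than launching anything.

```python
import os
import sys


def plan_parallel_runs(learning_rates, num_gpus, script="train.py"):
    """Assign one hyperparameter variant to each GPU.

    `train.py` and its `--lr` flag are hypothetical placeholders.
    Returns a list of (env, command) pairs, one per run.
    """
    if len(learning_rates) > num_gpus:
        raise ValueError("more variants than GPUs; run them in batches")
    runs = []
    for gpu_id, lr in enumerate(learning_rates):
        # Each process only sees the single GPU it was assigned.
        env = dict(os.environ, CUDA_VISIBLE_DEVICES=str(gpu_id))
        command = [sys.executable, script, "--lr", str(lr)]
        runs.append((env, command))
    return runs


runs = plan_parallel_runs([0.1, 0.01, 0.001, 0.0001], num_gpus=8)
for env, command in runs:
    print(env["CUDA_VISIBLE_DEVICES"], " ".join(command))
    # To launch for real: subprocess.Popen(command, env=env)
```

Because each run sees exactly one device, the training script itself does not need any multi-GPU logic.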

p3dn.24xlarge: High-performance and cost effective training

This instance previously held the title of fastest GPU instance in the cloud, which now belongs to p4d.24xlarge. That doesn’t make p3dn.24xlarge a slouch. It is still one of the fastest instance types you can find in the cloud today and is much more cost-effective than the P4 instance. You get access to 8 NVIDIA V100 GPUs, but unlike p3.16xlarge, whose GPUs have 16 GB of GPU memory each, the GPUs on p3dn.24xlarge have 32 GB of GPU memory each. This means you can fit much larger models and train with much larger batch sizes. The instance gives you access to 96 vCPUs, 768 GB of system memory and 100 Gbps network bandwidth, which becomes important for achieving near-linear scaling for large-scale distributed training jobs.

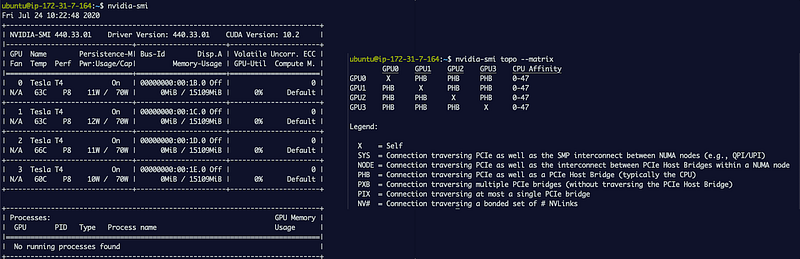

Run nvidia-smi on this instance and you can see that the GPU memory is 32 GB. The only instance with more GPU memory per GPU is the p4d.24xlarge, which has A100 GPUs with 40 GB of GPU memory each. If your models are large or you’re working on 3D images or other large data batches, then this is the instance to consider. Run nvidia-smi topo --matrix and you’ll see that NVLink is used for communication between GPUs. NVLink provides much higher inter-GPU bandwidth compared to PCIe, which means that multi-GPU and distributed training jobs will run much faster.

Amazon EC2 G4: High-performance single-GPU instances for training and multi-GPU options for cost-effective inference

G4 instances provide access to NVIDIA T4 GPUs based on the NVIDIA Turing architecture. You can launch a single GPU per instance or multiple GPUs per instance (4 GPUs, 8 GPUs). In the timeline diagram below, you’ll see that right below the G4 instance is the G5g instance, and both are based on GPUs with the NVIDIA Turing architecture. We already discussed the G5g instance type in the earlier section, and the GPUs in G4 (NVIDIA T4) and G5g (NVIDIA T4G) are very similar in performance. Your choice will come down to the CPU type on these instances.

- The G5g instance offers an ARM based AWS Graviton2 CPU

- The G4 instance offers an x86 based Intel Xeon Scalable CPU

- The GPUs (T4 and T4G) are very similar in performance profiles

In the GPU timeline diagram you can see that NVIDIA Turing architecture came after the NVIDIA Volta architecture and introduced several new features for machine learning like the next generation Tensor Cores and integer precision support which make them ideal for cost effective inference deployments and graphics.

G4 instance features at a glance:

- GPU Generation: NVIDIA Turing

- Supported precision types: FP64, FP32, FP16, Tensor Cores (mixed-precision), INT8, INT4, INT1

- GPU memory: 16 GB

- GPU interconnect: PCIe

What’s new in the NVIDIA T4 GPU on G4 instances?

NVIDIA Turing was the first to introduce support for the integer precision (INT8) data type, which can significantly accelerate inference throughput. During training, model weights and gradients are typically stored in single precision (FP32). As it turns out, to run predictions on a trained model, you don’t actually need full precision, and you can get away with reduced precision calculations in either half precision (FP16) or 8-bit integer precision (INT8). Doing so gives you a boost in throughput without sacrificing too much accuracy. There will be some drop in accuracy, and how much depends on various factors specific to your model and training. Overall, you get the best inference performance/cost with G4 instances compared to other GPU instances. NVIDIA’s support matrix shows what neural network layers and GPU types support INT8 and other precision for inference.
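To get a feel for where that small accuracy drop comes from, here is a minimal sketch of symmetric per-tensor INT8 quantization in plain Python. This is only one simple scheme, shown for illustration. It is not what TensorRT or any specific framework does during calibration, and the weight values are made up.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the INT8 codes."""
    return [q * scale for q in quantized]


weights = [0.82, -0.45, 0.03, -1.27, 0.6]  # made-up FP32 weights
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print(codes)
print(f"scale={scale:.4f}, max abs error={max_error:.4f}")
```

Each weight is stored as one of 255 integer levels, so the worst-case error per value is about half of `scale`. Whether that matters for your model’s accuracy is something you have to measure.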

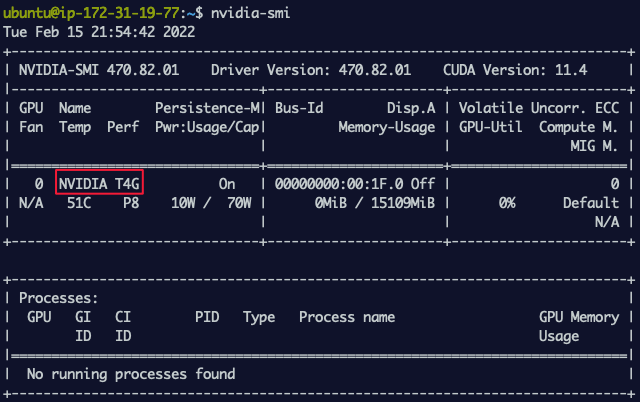

NVIDIA T4 (and NVIDIA T4G) are the lowest powered GPUs on any EC2 instance on AWS. Run nvidia-smi on this instance and you can see that the g4dn.xlarge has an NVIDIA T4 GPU with 16 GB of GPU memory. You’ll also notice that the power cap is 70W, compared to 300W on the NVIDIA A10G.

The following instance sizes all give you access to a single NVIDIA T4 GPU with an increasing number of vCPUs, system memory, storage and network bandwidth: g4dn.xlarge (4 vCPU, 16 GB system memory), g4dn.2xlarge (8 vCPU, 32 GB system memory), g4dn.4xlarge (16 vCPU, 64 GB system memory), g4dn.8xlarge (32 vCPU, 128 GB system memory), g4dn.16xlarge (64 vCPU, 256 GB system memory). You can find the full list of differences on the G4 instance product page under the Product Details section.
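If you know roughly how many vCPUs and how much system memory your data pipeline needs, picking a size from this list is a simple lookup. The sketch below hard-codes the numbers quoted above. The function name is my own, and you should check the product page for current specs before relying on it.

```python
# (vCPUs, system memory in GB) for the single-GPU g4dn sizes listed above.
G4DN_SINGLE_GPU_SIZES = {
    "g4dn.xlarge": (4, 16),
    "g4dn.2xlarge": (8, 32),
    "g4dn.4xlarge": (16, 64),
    "g4dn.8xlarge": (32, 128),
    "g4dn.16xlarge": (64, 256),
}


def smallest_g4dn(min_vcpus, min_memory_gb):
    """Return the smallest listed size meeting both requirements, or None."""
    # Dicts keep insertion order, so sizes are checked from smallest to largest.
    for name, (vcpus, memory_gb) in G4DN_SINGLE_GPU_SIZES.items():
        if vcpus >= min_vcpus and memory_gb >= min_memory_gb:
            return name
    return None


print(smallest_g4dn(min_vcpus=6, min_memory_gb=24))
print(smallest_g4dn(min_vcpus=4, min_memory_gb=100))
```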

G4 instance sizes also include two multi-GPU configurations: g4dn.12xlarge with 4 GPUs and g4dn.metal with 8 GPUs. However, if your use case is multi-GPU or multi-node/distributed training, you should consider using P3 instances. Run nvidia-smi topo --matrix on a multi-GPU g4dn.12xlarge instance and you’ll see that the GPUs are not connected by high-bandwidth NVLink GPU interconnect. P3 multi-GPU instances include high-bandwidth NVLink interconnects that can speed up multi-GPU training.

Amazon EC2 P2: Cost-effective for HPC workloads, NO LONGER recommended for only-ML workloads

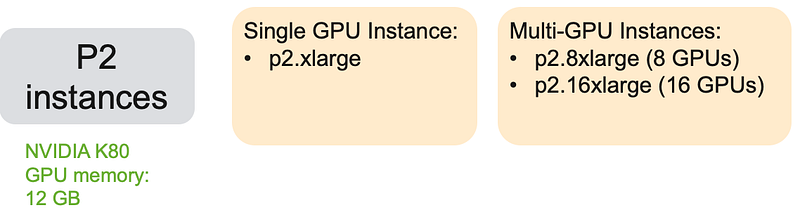

P2 instances give you access to NVIDIA K80 GPUs based on the NVIDIA Kepler architecture. The Kepler architecture is a few generations old (Kepler -> Maxwell -> Pascal -> Volta -> Turing), so these are not the fastest GPUs around. They do have some specific features, such as double precision (FP64) support, that make them attractive and cost-effective for high-performance computing (HPC) workloads that rely on the extra precision. P2 instances come in 3 different sizes: p2.xlarge (1 GPU), p2.8xlarge (8 GPUs), p2.16xlarge (16 GPUs).

The NVIDIA K80 is an interesting GPU. A single NVIDIA K80 is actually two GPUs on one physical board, which NVIDIA calls a dual-GPU design. What this means is that when you launch a p2.xlarge instance, you’re only getting one of the two GPUs on a physical K80 board. Similarly, when you launch a p2.8xlarge you’re getting access to eight GPUs on four K80 boards, and with p2.16xlarge you’re getting access to sixteen GPUs on eight K80 boards. Run nvidia-smi on a p2.xlarge and what you see is one of the two GPUs on an NVIDIA K80 board, with 12 GB of GPU memory.

P2 instance features at a glance:

- GPU Generation: NVIDIA Kepler

- Supported precision types: FP64, FP32

- GPU memory: 12 GB

- GPU interconnect: PCIe

So, should I even use the P2 instances for deep learning?

No, there are better options discussed above. Prior to the launch of Amazon EC2 G4 and G5 instances, the P2 instances were the recommended cost-effective deep learning training instance type. Since the launch of G4 instances, I recommend G4 as the go-to cost-effective training and prototyping GPU instance for deep learning training. P2 continues to be cost-effective for HPC workloads in scientific computing, but you’ll miss out on several new features such as support for mixed-precision training (Tensor Cores) and reduced precision inference, which have become a standard on newer generations.

If you run nvidia-smi on the p2.16xlarge GPU instance, since the NVIDIA K80 has a dual-GPU design, you’ll see 16 GPUs which are part of 8 NVIDIA K80 boards. This is the largest number of GPUs you can get on a single instance on AWS. If you run nvidia-smi topo --matrix, you’ll see that all inter-GPU communication goes through PCIe, unlike P3 multi-GPU instances which use the much faster NVLink.

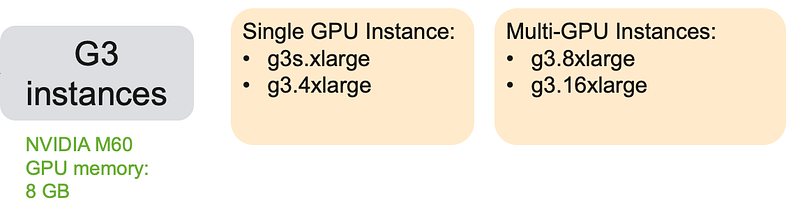

Amazon EC2 G3: NO LONGER recommended for only-ML workloads

G3 instances give you access to NVIDIA M60 GPUs based on the NVIDIA Maxwell architecture. NVIDIA refers to the M60 GPUs as virtual workstations and positions them for professional graphics. However, with much more powerful and cost-effective options for deep learning with P3, G4, G5, G5g instances, G3 is not a recommended option for deep learning. I’ve only included it here for some history and sake of completeness.

G3 instance features at a glance:

- GPU Generation: NVIDIA Maxwell

- Supported precision types: FP32

- GPU memory: 8 GB

- GPU interconnect: PCIe

Should you consider G3 instances for deep learning?

Prior to the launch of Amazon EC2 G4 instances, single GPU G3 instances were cost effective to develop, test and prototype. And although the Maxwell architecture is more recent than NVIDIA K80’s Kepler architectures found on P2 instances, you should still consider P2 instances before G3 for deep learning. Your choice order should be P3 > G4 > P2 > G3.

G3 instances come in 4 sizes: g3s.xlarge and g3.4xlarge (1 GPU, different system configurations), g3.8xlarge (2 GPUs) and g3.16xlarge (4 GPUs). Run nvidia-smi on a g3s.xlarge and you’ll see that this instance gives you access to an NVIDIA M60 GPU with 8 GB of GPU memory.

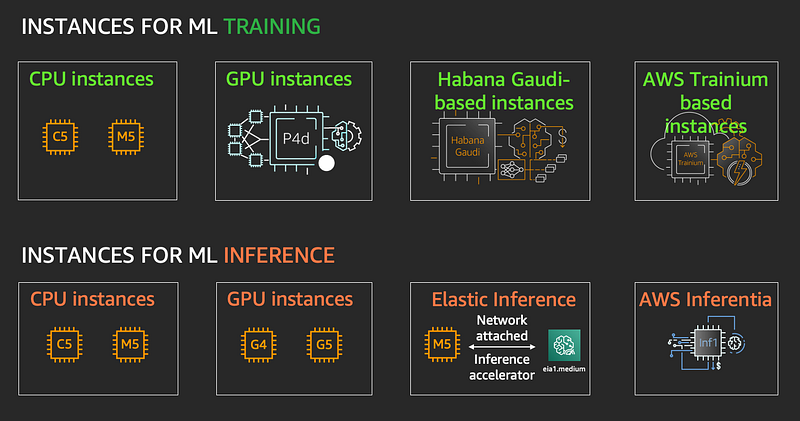

Other machine learning instance options on AWS

NVIDIA GPUs are no doubt a staple for deep learning, but there are other instance options and accelerators on AWS that may be the better option for your training and inference workloads.

- CPU: For training traditional ML models, prototyping and inference deployment

- Intel Habana-Gaudi based DL1 instances: With 8 x Gaudi accelerators you can use this as an alternative to P3dn and P4d GPU instances for training

- Amazon EC2 Trn1 instances: With up to 16 x AWS Trainium chips you can use this as an alternative to P3dn, P4d and DL1 instances for training

- AWS Elastic Inference: Save costs for inference workloads by leveraging EI to add just the right amount of GPU acceleration to your CPU instances discussed in this blog post

- Amazon EC2 Inf1 instances: With up to 16 x AWS Inferentia chips with 4 Neuron cores on each chip, this is a powerful and cost-effective option for inference deployment and is discussed in further detail on this blog post.

For a detailed discussion on inference deployment options, please refer to the blog post on choosing the right AI accelerators for inference.

Cost optimization tips when using GPU instances for ML

You have a few different options to optimize the cost of your training and inference workloads.

Spot instances

Spot-instance pricing makes high-performance GPUs much more affordable and allows you to access spare Amazon EC2 compute capacity at a steep discount compared to on-demand rates. For an up-to-date list of prices by instance and Region, visit the Spot Instance Advisor. In some cases you can save over 90% on your training costs, but your instances can be preempted and terminated with just 2 minutes’ notice. Your training scripts must implement frequent checkpointing and the ability to resume training once Spot capacity is restored.
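The checkpoint-and-resume pattern itself is framework independent. Here is a toy sketch of it using a JSON file as the checkpoint. The training step is a stand-in, the `stop_after` argument is a made-up way to simulate an interruption, and in practice you would save real model and optimizer state with your framework’s own utilities and keep checkpoints on durable storage such as Amazon S3.

```python
import json
import os
import tempfile


def train(total_steps, checkpoint_path, stop_after=None):
    """Toy training loop that checkpoints every step and resumes if possible.

    `stop_after` simulates a Spot interruption after that many steps in this run.
    """
    state = {"step": 0, "loss": 1.0}
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path) as f:
            state = json.load(f)  # resume from the last saved step

    steps_this_run = 0
    while state["step"] < total_steps:
        if stop_after is not None and steps_this_run >= stop_after:
            return state  # interrupted before finishing
        state["step"] += 1
        state["loss"] *= 0.9  # stand-in for a real optimizer update
        # Write to a temp file, then rename, so a kill mid-write cannot
        # leave a corrupt checkpoint behind.
        tmp_path = checkpoint_path + ".tmp"
        with open(tmp_path, "w") as f:
            json.dump(state, f)
        os.replace(tmp_path, checkpoint_path)
        steps_this_run += 1
    return state


checkpoint = os.path.join(tempfile.mkdtemp(), "checkpoint.json")
interrupted = train(total_steps=10, checkpoint_path=checkpoint, stop_after=4)
resumed = train(total_steps=10, checkpoint_path=checkpoint)
print(interrupted["step"], resumed["step"])
```

The second call picks up from the saved step instead of starting over, which is the behavior you want when a Spot instance comes back.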

Amazon SageMaker managed training

During the development phase, much of your time is spent prototyping, tweaking code and trying different options in your favorite editor or IDE (which is obviously VIM), none of which needs a GPU. You can save costs by simply decoupling your development and training resources, and Amazon SageMaker lets you do this easily. Using the Amazon SageMaker Python SDK you can test your scripts locally on your laptop, desktop, EC2 instance or SageMaker notebook instance.

When you’re ready to train, specify what GPU instance type you want to train on and SageMaker will provision the instances, copy the dataset to the instance, train your model, copy results back to Amazon S3, and tear down the instance. You are only billed for the exact duration of training. Amazon SageMaker also supports managed Spot Training for additional convenience and cost savings.

Here’s my guide: A quick guide to using Spot instances with Amazon SageMaker

Use just the required amount of GPU with Amazon Elastic Inference

Save costs for inference workloads by leveraging EI to add just the right amount of GPU acceleration to your CPU instances discussed in this blog post: A complete guide to AI accelerators for deep learning inference — GPUs, AWS Inferentia and Amazon Elastic Inference

Optimize for cost by improving utilization

- Optimize your training code to take full advantage of the Tensor Cores on P3, G4 and G5 instances by enabling mixed-precision training. Every deep learning framework does this differently, so you’ll have to refer to the specific framework’s documentation.

- Use reduced-precision (INT8) inference on G4 and G5 instance types to improve performance. NVIDIA’s TensorRT library provides APIs to convert single-precision models to INT8, and its documentation includes examples.
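To give a feel for what converting to INT8 means, here is a toy sketch of symmetric quantization in plain Python. This is not TensorRT's API or its calibration procedure, and the function names and sample weights are made up for illustration. It only shows the basic arithmetic: floats are mapped to 8-bit integers through a scale factor, at the cost of a small rounding error.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization to integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Map the integers back to approximate float values."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.003, 1.0]  # made-up example values
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, max_error)
```

Each value now fits in one byte instead of four, and the rounding error per value stays within half of the scale factor. Whether that error is acceptable depends on your model, which is why you should validate accuracy after converting.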

What software to use on Amazon EC2 GPU instances?

Downloading your favourite deep learning framework is easy, right? Just pip install XXX or conda install XXX or docker pull XXX and you’re all set, right? Not really. Frameworks you install from upstream repositories are often not optimized for the target hardware they’ll run on. They are built to support a wide range of CPU and GPU types, so they target the lowest common denominator of features and performance optimizations, which can lead to substantially degraded performance on your AWS GPU instance.

For this reason, I highly recommend using AWS Deep Learning AMIs or AWS Deep Learning Containers (DLC) instead. AWS qualifies and tests them on all Amazon EC2 GPU instances, and they include AWS optimizations for networking and storage access, along with the latest NVIDIA and Intel drivers and libraries. Deep learning frameworks have upstream and downstream dependencies on higher-level schedulers and orchestrators and on lower-level infrastructure services. By using AWS Deep Learning AMIs and DLCs, you know the whole stack has been tested end-to-end and is guaranteed to give you the best performance.

Which GPUs to consider for HPC use-cases?

High-performance computing (HPC) is another domain that relies on GPUs to speed up computation for simulation, data processing and visualization. While deep learning training can be done with lower-precision arithmetic, from FP32 (single precision) down to FP16 (half precision) and variations such as Bfloat16 and TF32, HPC applications need high-precision arithmetic up to FP64 (double precision). The NVIDIA A100, V100 and K80 GPUs support FP64 precision, and these are available on P4, P3 and P2 instances respectively.
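To see why precision matters for long-running numerical computations, here is a small CPU-only sketch that emulates FP16, FP32 and FP64 accumulation with Python's `struct` module. The helper names and the choice of adding 0.001 ten thousand times are my own. It's a toy illustration of rounding behavior, not a GPU benchmark.

```python
import struct


def round_to(fmt, x):
    """Round a Python float (FP64) to the given IEEE 754 format and back."""
    return struct.unpack(fmt, struct.pack(fmt, x))[0]


def accumulate(fmt, step, n):
    """Add `step` n times, rounding the running sum after every addition."""
    step = round_to(fmt, step)
    total = 0.0
    for _ in range(n):
        total = round_to(fmt, total + step)
    return total


# struct format codes: e = half, f = single, d = double precision
FORMATS = {"FP16": "<e", "FP32": "<f", "FP64": "<d"}

# The exact answer is 10.0; record how far each precision ends up from it.
errors = {
    name: abs(accumulate(fmt, 0.001, 10_000) - 10.0)
    for name, fmt in FORMATS.items()
}
for name, err in errors.items():
    print(name, err)
```

In FP16 the running sum eventually gets large enough that adding 0.001 no longer changes it, so the result lands far from 10. FP32 gets close and FP64 closer still. Simulations that accumulate millions of small updates hit the same effect at much larger scale, which is why FP64 support matters for HPC.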

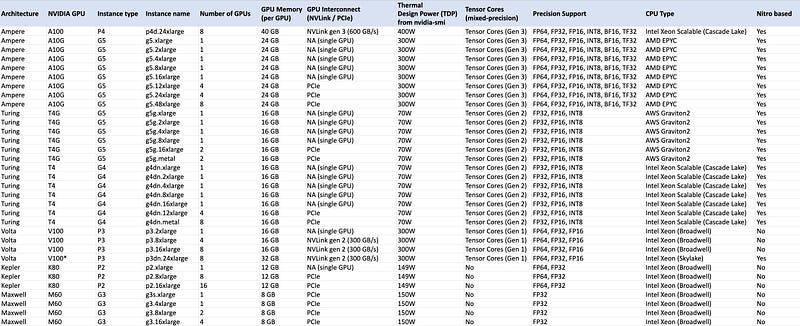

A complete and unapologetically detailed spreadsheet of all GPU instances and their features

In today’s “I put this together because I couldn’t find one already” contribution, I present to you a features list for GPUs on AWS. Before I launch a GPU instance, I often want to know how much memory is on a specific GPU, whether it supports a specific precision type, or whether the instance has an Intel, AMD or Graviton CPU. To avoid having to go through various webpages and NVIDIA white papers, I’ve painstakingly compiled all the information into a table. You can use the image below or go right to the markdown table embedded at the end of the post and hosted on GitHub, your choice. Enjoy!

GPU features at a glance

Do you prefer consuming content in a graphical format? I’ve got you covered there too! The following image shows all the GPU instance types and sizes on AWS. There isn’t enough space for all the features, so for those I still recommend the spreadsheet.

Hey there! Thanks for reading!

Thank you for reading. If you found this article interesting, consider giving it some applause and following me on Medium. Please also check out my other blog posts on Medium, follow me on Twitter (@shshnkp) or LinkedIn, or leave a comment below. Want me to write on a specific machine learning topic? I’d love to hear from you!